Cables are typically cheaper than the devices they connect, but good cable quality is key to making everything work seamlessly. Cables inherently introduce loss into the signal they carry, and they act as a filter that attenuates higher-frequency components the most. As loss levels and loss variation increase, devices have a harder time maintaining a stable, high-quality communication link across the cable.

In an ideal world, impedance varies very little along a cable, so loss increases gradually and smoothly with frequency.

In the real world, the cable's material, connector-wire assembly, and length have a big impact on impedance levels and variations.

These impedance fluctuations take a toll on the signal, producing uneven loss through the cable at different frequencies. The greater the loss and the more it varies, the harder it becomes for devices to maintain a stable communication link through the cable.

Investing in good quality cables is like buying insurance. The higher the quality of the cable, the more resilient the communication across a wide range of device types and connection environments. You don't want to increase the risk of a bad connection just to save a small percentage of the overall system cost by using cheap, low-quality cables.

But how can you tell the difference between a good quality cable and a bad one? Traditionally, cable testing has been complex, difficult to perform, and hard to understand. It's not surprising, then, that many companies and users don't actually know the quality of the cables they are using or how to compare quality between different cables.

With the GRL DI Tester from Granite River Labs, testing becomes much simpler and more accessible, allowing anyone to check and compare cable quality. Because it is so quick and easy to use, the GRL DI Tester has been used to test a large number of cables, with big data techniques applied to analyze and compare them across four key measures: Loss, Linearity, Margin, and Consistency.

The Loss measure captures the absolute loss through the cable across different frequencies, and compares which cables generally have more loss than others.

The Linearity measure represents the degree to which loss (dB) increases linearly as frequency increases, and compares which cables have more linear loss profiles than others.
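One way to put a number on linearity is sketched below. The article does not say how the GRL DI Tester computes this measure, so the choice of R² from a least-squares straight-line fit, the function name `linearity_r2`, and the sample values are all assumptions made for illustration.

```python
def linearity_r2(freqs_ghz, loss_db):
    """R^2 of a least-squares straight-line fit of loss (dB) against frequency.

    Assumption: R^2 stands in for the Linearity measure; the real tester
    may use a different statistic.
    """
    n = len(freqs_ghz)
    if n != len(loss_db) or n < 2:
        raise ValueError("need at least two matching (frequency, loss) points")

    mean_f = sum(freqs_ghz) / n
    mean_l = sum(loss_db) / n
    sxx = sum((f - mean_f) ** 2 for f in freqs_ghz)
    sxy = sum((f - mean_f) * (l - mean_l) for f, l in zip(freqs_ghz, loss_db))
    syy = sum((l - mean_l) ** 2 for l in loss_db)

    if sxx == 0:
        raise ValueError("frequencies must not all be identical")
    if syy == 0:
        return 1.0  # constant loss lies exactly on a (flat) line

    slope = sxy / sxx
    intercept = mean_l - slope * mean_f
    # Residual sum of squares: how far the measured loss strays from the line.
    ss_res = sum((l - (slope * f + intercept)) ** 2
                 for f, l in zip(freqs_ghz, loss_db))
    return 1.0 - ss_res / syy


# Hypothetical loss profiles sampled at 1, 2, 3 and 4 GHz.
smooth = linearity_r2([1, 2, 3, 4], [2.0, 4.0, 6.0, 8.0])
rippled = linearity_r2([1, 2, 3, 4], [2.0, 6.0, 4.0, 8.0])
```

Under this sketch, a value close to 1 means loss rises almost perfectly in a straight line, while ripple in the loss profile pulls the value down.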

The Margin measure reflects how well the loss meets the specification requirements, and compares which cables are better at meeting the spec than others.
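As a rough illustration of the idea, margin can be thought of as the headroom between measured loss and the limit the spec allows. The sketch below assumes loss is expressed as a positive dB value, that the spec gives a maximum allowed loss at each test frequency, and that the worst-case frequency determines the margin. None of these details come from the article, and the function name and numbers are hypothetical.

```python
def worst_case_margin_db(measured_loss_db, spec_limit_db):
    """Smallest headroom (limit minus measured loss) over all test frequencies.

    Assumption: both lists hold positive dB values sampled at the same
    frequencies. A negative result means the cable exceeds the limit somewhere.
    """
    if not measured_loss_db or len(measured_loss_db) != len(spec_limit_db):
        raise ValueError("need matching, non-empty loss and limit lists")
    return min(limit - loss
               for loss, limit in zip(measured_loss_db, spec_limit_db))


# Hypothetical measurements and limits at three frequencies.
passing = worst_case_margin_db([1.0, 2.5, 4.0], [2.0, 4.0, 5.0])
failing = worst_case_margin_db([1.0, 4.5, 4.0], [2.0, 4.0, 5.0])
```

A cable with a larger positive margin has more room to absorb extra loss from connectors, temperature, or aging before it falls out of spec.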

The Consistency measure calculates the loss variation across different wires (lanes) and samples of the same cable model. Ideally, all lanes are designed and produced so that there is very little variation. The Consistency measure also compares which cables have more consistent lane-to-lane loss behavior than others.
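Lane-to-lane variation can be sketched as a simple spread statistic. The example below takes the standard deviation of loss across lanes at each frequency and averages the result. This is an assumed formulation, not the tester's documented method, and `lane_spread_db` and the sample data are hypothetical.

```python
from statistics import mean, pstdev


def lane_spread_db(lane_losses_db):
    """Average across-lane standard deviation of loss, in dB.

    lane_losses_db: one list of loss values per lane (or per sample),
    all taken at the same frequencies. Lower means more consistent.
    """
    if len(lane_losses_db) < 2:
        raise ValueError("need at least two lanes to measure variation")
    n_points = len(lane_losses_db[0])
    if n_points == 0 or any(len(lane) != n_points for lane in lane_losses_db):
        raise ValueError("every lane needs the same, non-zero number of points")

    # zip(*...) regroups the data so each tuple holds all lanes at one frequency.
    per_frequency = [pstdev(values) for values in zip(*lane_losses_db)]
    return mean(per_frequency)


# Hypothetical two-lane cables measured at two frequencies.
matched = lane_spread_db([[1.0, 2.0], [1.0, 2.0]])
mismatched = lane_spread_db([[1.0, 2.0], [3.0, 4.0]])
```

In this sketch, identical lanes give a spread of zero, and the value grows as the lanes drift apart.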

The tested cables are then evenly divided into quintiles for each measure, with the top quintile representing the cables that have the best 20% performance for that measure and the bottom quintile representing the cables that have the worst 20% performance. The top quintile is assigned 5 stars while the bottom quintile is assigned 1 star.

The Overall score is the average of the quintile ratings a cable receives across all measures, and the results are again scaled evenly so that 5 stars go to the top 20%, 4 stars go to the next best 20%, and so on.
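The star assignment and the overall score described above can be sketched as follows. This is a minimal illustration assuming rank-based quintiles with ties broken by input order. The function names, the `higher_is_better` flags, and the sample scores are hypothetical and are not taken from the actual scoring pipeline.

```python
from statistics import mean


def quintile_stars(scores, higher_is_better=True):
    """Map {cable: score} to {cable: stars}, where stars run from 1 to 5.

    Cables are ranked worst first and split into five equal-sized groups.
    Assumption: ties are broken by input order (sorted() is stable).
    """
    if not scores:
        return {}
    ranked = sorted(scores, key=scores.get, reverse=not higher_is_better)
    n = len(ranked)
    return {name: 1 + (rank * 5) // n for rank, name in enumerate(ranked)}


def overall_stars(per_measure_scores, higher_is_better):
    """Average each cable's per-measure stars, then re-rank into quintiles.

    Assumption: every measure contains a score for every cable.
    """
    stars = {measure: quintile_stars(scores, higher_is_better[measure])
             for measure, scores in per_measure_scores.items()}
    cables = list(next(iter(per_measure_scores.values())))
    averages = {cable: mean([stars[m][cable] for m in stars])
                for cable in cables}
    return quintile_stars(averages)


# Hypothetical raw scores for five cables on two of the four measures.
raw = {
    "loss": {"A": 1.0, "B": 2.0, "C": 3.0, "D": 4.0, "E": 5.0},
    "linearity": {"A": 0.99, "B": 0.95, "C": 0.90, "D": 0.85, "E": 0.80},
}
result = overall_stars(raw, {"loss": False, "linearity": True})
```

Note that loss is ranked with `higher_is_better=False`, since a cable with less loss should earn more stars.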

In addition to the star ratings, you can see the relative distribution of all tested cables for each measure, and where a specific cable (shown in red) falls within that distribution.


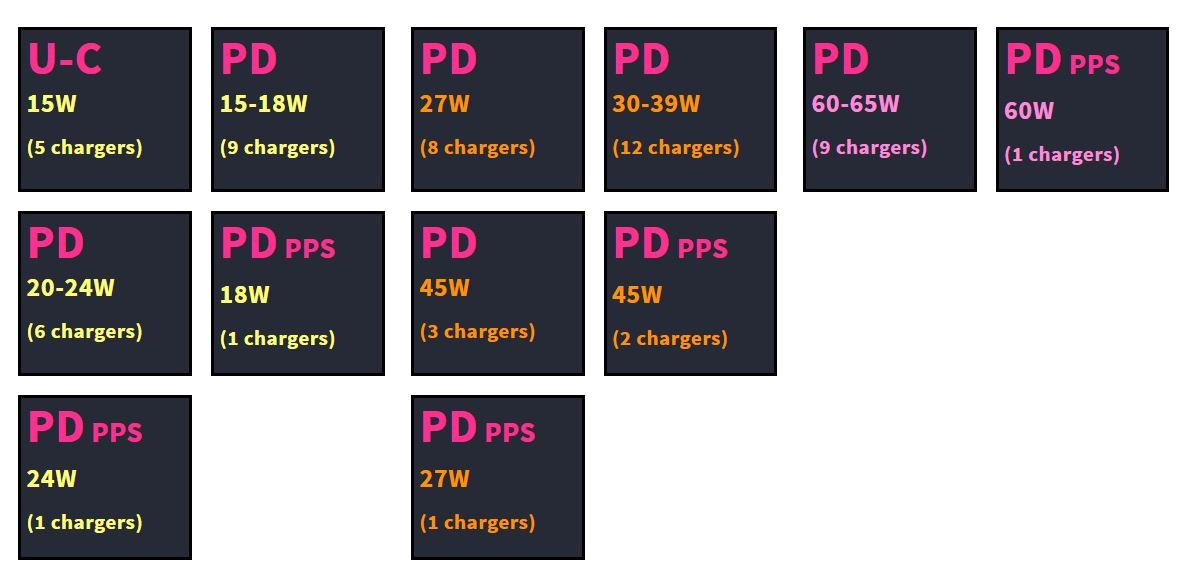

For similar benchmarking analysis and scoring data for chargers, please click here.

GTrusted
